While this fixes the issue of having a more predictable distribution of values, it’s almost never used, because you lose a lot of information by squishing all nuance out of the network, so to speak.

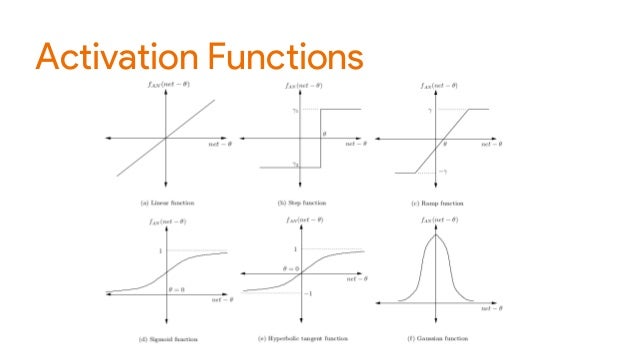

A somewhat more effective, but still super simple way to tackle this problem is the step activation function, an illustration of which can be found below.Īs one can see, all the step activation function does is take the input, and assign it to either 0 or 1, depending on whether the input is larger or smaller than 0. Of course, this wouldn’t be of much use as it literally doesn’t do anything, and so we would still face the aforementioned problem of an unpredictable distribution of values, destabilizing the training of our network.

With this in mind, what does a real-world activation function look like? Perhaps the simplest activation function one could think of is the identity function, which corresponds to not changing the inputs in any way.
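To make the two functions discussed here a bit more concrete, here is a minimal sketch in plain Python. The function names and the sample inputs are made up for illustration, and mapping an input of exactly 0 to 0 is an assumed convention, since the text only talks about inputs larger or smaller than 0.

```python
def identity(x: float) -> float:
    """Identity activation: hand the input back unchanged."""
    return x


def step(x: float) -> int:
    """Step activation: 1 for inputs larger than 0, otherwise 0.

    Sending exactly 0 to 0 is an assumption made for this sketch.
    """
    return 1 if x > 0 else 0


# Hypothetical pre-activation values, chosen only for illustration.
inputs = [-2.5, -0.1, 0.0, 0.3, 4.0]

identity_out = [identity(x) for x in inputs]  # same values as the inputs
step_out = [step(x) for x in inputs]          # every value collapses to 0 or 1

print(identity_out)
print(step_out)
```

Notice that 0.3 and 4.0 both end up as 1 under the step function. The output range is now perfectly predictable, but the size of the input is gone, which is exactly the loss of information mentioned above.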
